Sonification - Increasing the senses, increasing inclusion

Objectives

The human brain is still by far the most powerful tool for the perusal of large data sets and, at the end of the chain, these data sets are ultimately analysed by humans. The space-physics community is in need of new methods to facilitate a more dynamic and detailed inspection of large data sets.

As part of our strategy to engage citizens in online frontier science, our dedicated work package, WP7 Increasing The Senses, Increasing Inclusion, aims to take these efforts one step further.

The idea is to expand the senses used in scientific inference beyond the purely visual, and so to include the sense-impaired, in particular the visually impaired, both in the Project’s overall effort and in the scientific community. This is done through specially developed, user-centred software that analyses scientific data presented in tabular format, through both visual and sonified representations. An exciting aspect is that using sound for data analysis can prove at least as effective as visual inspection and, in some cases, data mixed with noise is better characterized using a sound display.

Cognitive, neurological and machine-learning systems, and the like, may be defined as capable of learning from their interaction with data and humans, essentially continuously re-programming themselves. These digital systems do not assess the risk of taking the user out of the loop, which removes the ability to perceptually inform the user of uncertainty. Multi-sensorial perception, on the other hand, allows for risk assessment in decision-making and gives the data analyst full control over the data, as well as the possibility to explore, interpret and take decisions.

In parallel, we are also looking to capture different perspectives from citizens, artists, artisans and senior citizens, with particular attention to gender, and with the general goal of promoting citizen-science methodologies of critical thinking in society.

SonoUno

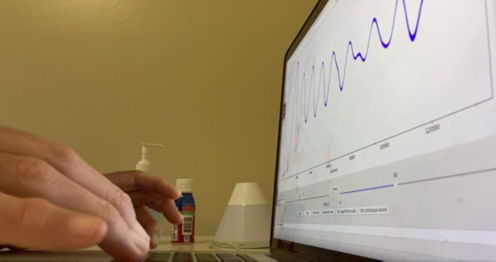

The development of sonoUno is at the heart of this multi-sensory approach. The software is user-centred and enables people with different sensory styles to explore and analyse scientific data presented in tabular format, through both visual and sonified representations, and so to make science.

The sonoUno software was initially developed based on the study of other relevant software packages, such as Sonification Sandbox, MathTrax and xSonify, and following accessibility standards such as ISO 9241-171:2008 (Guidance on software accessibility). Two versions of the software have been developed within REINFORCE: a browser-based version, accessible via the web, and a desktop version. A project is currently underway to unify the code-base of the two versions, to streamline the development workflow at a later stage of REINFORCE. A new domain has been set up for sonoUno to outlast the REINFORCE Project: https://www.sonouno.org.ar/

Therefore, in the same way that our citizen scientists are invited to Zooniverse, here we invite them to sonorise data and discover how to detect a signal with sound.
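To give a feel for what sonifying a column of tabular data can mean, here is a minimal Python sketch. It is not sonoUno's implementation. It assumes a simple linear value-to-pitch mapping, and the function names (`values_to_frequencies`, `synthesize`, `write_wav`), the 220 to 880 Hz pitch range and the note length are all illustrative choices made for this example.

```python
import math
import struct
import wave


def values_to_frequencies(values, f_min=220.0, f_max=880.0):
    """Linearly map each data value to a pitch between f_min and f_max (Hz).

    Assumption for this sketch: the smallest value gets the lowest pitch
    and the largest value the highest pitch.
    """
    lo, hi = min(values), max(values)
    span = hi - lo
    if span == 0:
        # A constant column has no range to map: use the middle pitch.
        return [(f_min + f_max) / 2.0 for _ in values]
    return [f_min + (v - lo) / span * (f_max - f_min) for v in values]


def synthesize(frequencies, note_seconds=0.2, sample_rate=44100, amplitude=0.5):
    """Render one sine tone per frequency as signed 16-bit PCM samples."""
    samples_per_note = int(note_seconds * sample_rate)
    samples = []
    for freq in frequencies:
        for i in range(samples_per_note):
            value = amplitude * math.sin(2.0 * math.pi * freq * i / sample_rate)
            samples.append(int(value * 32767))
    return samples


def write_wav(path, samples, sample_rate=44100):
    """Write mono 16-bit PCM samples to a WAV file."""
    with wave.open(path, "wb") as wav_file:
        wav_file.setnchannels(1)
        wav_file.setsampwidth(2)  # 2 bytes = 16 bits per sample
        wav_file.setframerate(sample_rate)
        wav_file.writeframes(struct.pack("<%dh" % len(samples), *samples))
```

For instance, calling `write_wav("column.wav", synthesize(values_to_frequencies([0.1, 0.2, 0.1, 2.5, 0.2])))` would produce a short file in which the outlying value 2.5 is heard as a clearly higher tone than its neighbours. In this simple mapping, that is the sense in which a signal can be detected with sound.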

Training Materials

Hand in hand with the software development, we are developing twelve training modules as part of our sonorisation activities. There are three modules for each of the four demonstrator projects developed within REINFORCE:

- a module on the use of the demonstrator project in Zooniverse;

- a module on usage of the Zooniverse demonstrator project within sonoUno;

- a separate module on data analysis using data from the demonstrator project.

Together these modules build the complete training course, which can be accessed here:

- Module 1 - Using the GWitchHunters Zooniverse demonstrator;

- Module 2 - Using GWitchHunters gravitational-wave data in sonoUno;

- Module 3 - Using GWitchHunters gravitational-wave data;

- Module 4 - Using the Deep Sea Hunters Zooniverse demonstrator;

- Module 5 - Using Deep Sea Hunters data in sonoUno;

- Module 6 - Using Deep Sea Hunters data;

- Module 7 - Using the New Particle Search at CERN;

- Module 8 - Using New Particle Search at LHC data in sonoUno;

- Module 9 - Using New Particle Search at LHC data;

- Module 10 - Using the Cosmic Muon Images Zooniverse demonstrator;

- Module 11 - Using Cosmic Muon Images data in sonoUno;

- Module 12 - Using Cosmic Muon Images data.

Modules 1, 4, 7 and 10 are dedicated to using the demonstrator projects within the Zooniverse platform (GWitchHunters, Deep Sea Hunters, New Particle Search at LHC and Cosmic Muon Images). They provide a grounding in the science behind each demonstrator project, as well as information on the collaborations that carry the science forward. They use walkthroughs of the demonstrators and explanations of how the classification procedure within them works.

Within the sonoUno and data modules - Modules 2, 3, 5, 6, 8, 9, 11 and 12 - users will be introduced to the sonoUno software and concepts and will receive a walk-through of the functionality, prior to concentrating on an analysis of sample data from the individual demonstrator projects. The core objectives of these modules are to:

- develop experiments that are designed to research perception techniques;

- develop experiential, hands-on modules targeting people with disabilities and senior scientists;

- encourage engagement, develop trust and make students feel welcomed, focussing on the person and on their abilities;

- promote acceptance of the multi-sensorial exploration of data and detection and identification of signals and events.